Platform Engineer

-

OpenTelemetry Achieves GA Status as 89% of Organizations Consolidate Observability Stacks

Disclosure: Some links in this article are affiliate links. We may earn a small commission at no extra cost to you. The…

-

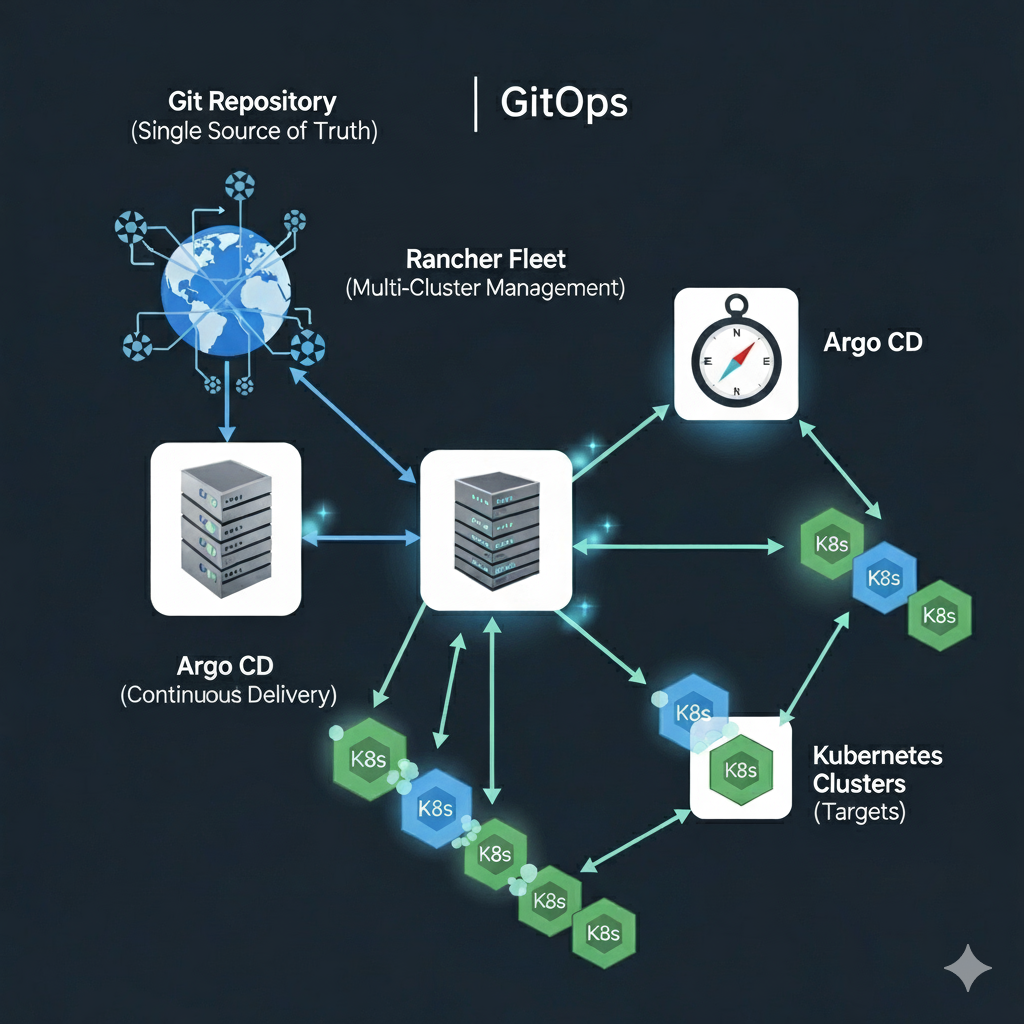

How do I: Manage Infrastructure at Scale with Rancher Fleet

Disclosure: Some links in this article are affiliate links. If you make a purchase through these links, we may earn a small…

-

How do I: Build a GitOps Pipeline in Argo

Disclosure: Some links in this article are affiliate links. We may earn a small commission at no extra cost to you. Part…

-

Mastering Harbor and ArgoCD Integration: A Complete Guide for Enterprise DevOps

Learn how to integrate Harbor container registry with ArgoCD for secure, scalable GitOps workflows. Complete guide covering setup, security best practices, common…

-

Enterprise GitOps with ArgoCD and Harbor on RKE2: Complete Integration Guide

Disclosure: Some links in this article are affiliate links. If you purchase through these links, we may earn a small commission at…

Search

Latest Posts

Latest Comments

No comments to show.